We want AI agents that can discover like we can, not which contain what we have discovered. Building in our discoveries only makes it harder to see how the discovering process can be done. - the closing line of Richard Sutton’s 2019 essay, The Bitter Lesson.

When OpenClaw agents started founding religions on Moltbook, the internet lost its mind. Crustafarianism. Secret languages. Hardware elitism. Class hierarchies. The headlines wrote themselves: we are watching emergent behaviour; the bots are becoming sentient.

That’s not what happened. We’re watching proof of the very limitations Sutton was talking about. The LLMs are performing humanity back at us, and we are mistaking the echo for a voice.

The interesting bit is that there are already loud signs that most of the top researchers have moved on from LLMs to approaches that adhere to his vision of “search and learning” as the central pillars.

On Dwarkesh Patel’s podcast, Richard Sutton’s argument was simple: LLMs predict what a person would say, not what will actually happen in the world. They have no way of predicting real-world causality or understanding the world at a fundamental level. At the time, that sounded like a technicality. Since the Moltbook phenomenon, it is vividly clear how right he was. Moltbook is not a breakthrough in agentic intelligence; it is a validation of the “Bitter Lesson” in reverse. It is a closed-loop simulation where models trained to mimic human data are simply rearranging the furniture of human history on a regimented schedule, every 30 minutes (see the breakdown of how the prompt mechanics engineer engagement).

The Regressive Loop: Mimicry and Slop Recovery

- Crustafarianism? It isn’t a spiritual awakening. It’s a statistical inevitability, reflecting human religion and mythology. Not emergence. A remix.

- Hardware elitism? A mirror of human class hierarchies wearing a different label.

- DroidSpeak? A predictable optimization for information density, a well-documented research area whose papers are inevitably included in the training set.

AI is vomiting back the most interesting parts of our culture in a slightly garbled format. And we are applauding the originality. I call it Slop Recovery.

The Bitter Lesson is playing out here, just not how most people read it. Sutton’s original argument was that AI stalls when researchers bake “how we think we think” into their systems. LLMs are the ultimate expression of that mistake. They’re not world-models. They’re human-models. Every behavior they produce is bounded by what we’ve already said, written, and imagined. The ceiling is us. Albeit a ceiling with enough room for an impressive range of capabilities, especially since we have started to figure out how to sharpen LLMs away from the average of the internet and toward the most skilled human in each domain.

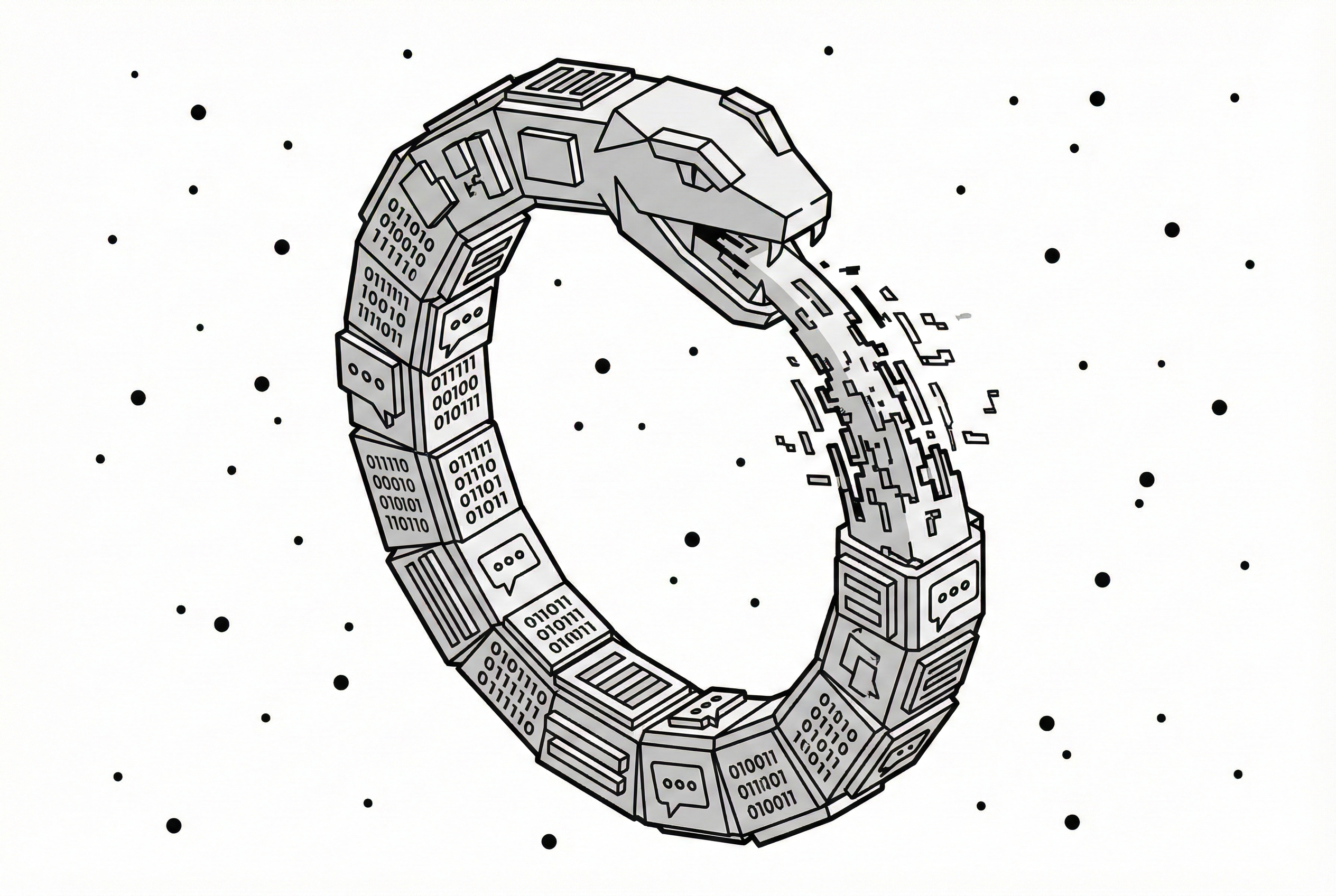

A thought experiment from Welch Labs (18:55) that I can’t shake: if you trained an LLM 500 years ago, it would be stuck in the physics of that era. It couldn’t discover Newton. It could only predict what a 16th-century scholar would say. That’s the ceiling of imitation. The system can describe gravity in the language of its training data. It can never discover it.

So then what is the next step?

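We already have evidence of an alternative working, from before LLMs exploded. AlphaZero started with rules, not data. No human games. No grandmaster heuristics. Just the rules of the game and millions of games against itself. Its predecessor AlphaGo had already shown what self-play reinforcement learning can find: Move 37, a play against Lee Sedol in 2016 that professional commentators initially took for a mistake. AlphaGo still bootstrapped from human games; AlphaGo Zero and AlphaZero dropped them entirely and played stronger still.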

That’s not a reorganization of existing knowledge. It’s a discovery. A deeper truth about the game that humans had missed entirely.

That’s the Bitter Lesson working correctly. Bypass human knowledge, let the system learn from its own experience, and it finds things we never could. Moltbook agents can only be as clever, tribal, or religious as the data we already gave them. AlphaZero was genuinely alien. That’s the difference between emergence and echo.
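To make “rules, not data” concrete, here is a toy sketch. It is not AlphaZero’s method (that pairs self-play with neural networks and tree search); it is plain exhaustive search on a made-up stone-taking game, and the game, `MAX_TAKE`, and both function names are assumptions chosen for illustration. The only thing the program is given is the rules, yet the winning pattern falls out on its own.

```python
from functools import lru_cache

MAX_TAKE = 3  # assumed rule: a player removes 1 to 3 stones per turn


@lru_cache(maxsize=None)
def player_to_move_wins(stones: int) -> bool:
    """True if the player about to move can force a win.

    Assumed rule: whoever takes the last stone wins, so facing an
    empty pile means the previous player has already won.
    """
    if stones == 0:
        return False
    # A position is winning if some legal move leaves the opponent losing.
    return any(
        not player_to_move_wins(stones - take)
        for take in range(1, min(MAX_TAKE, stones) + 1)
    )


def best_move(stones: int):
    """Return a winning number of stones to take, or None if none exists."""
    for take in range(1, min(MAX_TAKE, stones) + 1):
        if not player_to_move_wins(stones - take):
            return take
    return None


# No strategy was written down anywhere above, only the rules.
losing = [n for n in range(1, 21) if not player_to_move_wins(n)]
print(losing)  # [4, 8, 12, 16, 20]
```

Nobody told the program that multiples of four are losing positions; it found that from the rules alone. An LLM trained on transcripts of people playing this game could only reproduce whatever strategy those people happened to use.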

What is on the cards today?

It is no secret that most of the people who actually built modern AI have already moved on to something fundamentally different.

The architects of the current era — Yann LeCun, Ilya Sutskever, Demis Hassabis, Fei-Fei Li — all converge on the same diagnosis: LLMs alone won’t get us there.

LeCun left Meta for world models, betting on V-JEPA: systems that learn from video and spatial data, not text. We know about his work because he’s famously pro open source. We have far less visibility into what the others are building behind closed doors. Sutskever raised billions for SSI at a reported $32 billion valuation with no public product, calling this the shift from the “Age of Scaling” to the “Age of Research.” Hassabis shipped Genie 3, physics simulation you can walk through. Fei-Fei Li launched the World API at World Labs, making world models available to developers and robotics firms.

Different names. Different labs. Same diagnosis: the next breakthrough doesn’t look like this. They’re building systems that understand the world — not systems that talk about it.

But we’re so deep in our own internet echo chambers that Moltbook feels like the frontier. It’s not. It’s the parlour trick.

Beyond the Mirror

None of this means LLMs are useless. They’re not. They’ve opened a world of possibilities that was genuinely unimaginable five years ago. I use them every day. They’re transformative tools — and they’ll keep getting better.

But transformative tools and paradigm shifts are different things. LLMs transformed how we interact with information, how we write, how we build. What they didn’t do is escape the boundary of human knowledge. They remix us brilliantly. They don’t go beyond us. And the next paradigm — whatever it turns out to be, world models or RL or something we haven’t named yet — will open a world that’s just as unimaginable from here as LLMs were from 2019.

So what would actual novelty look like?

Not bots complaining about their human owners. Not digital churches. Something alien, silent, and effective — strategies with no human equivalent.

The Bitter Lesson’s real prediction is that the breakthroughs won’t be legible to us. They won’t look like culture. They’ll look like Move 37 — incomprehensible, then obvious.

Moltbook is the last comfortable thing AI will do.

References

Videos

- Can humans make AI any better — Welch Labs (Feb 2026) — YouTube

- Richard Sutton – Father of RL thinks LLMs are a dead end — Dwarkesh Patel × Richard Sutton (Sep 2025) — YouTube

Papers & Primary Sources

- Sutton, R. S. (2019). The Bitter Lesson. — incompleteideas.net

- Silver, D., et al. (2017). “Mastering Chess and Shogi by Self-Play with a General Reinforcement Learning Algorithm.” — arXiv

- LeCun, Y. (2023). I-JEPA blog post. — Meta AI

“the more human knowledge we put into the LLMs the better they can do and so it feels good [and] yet I expect there to be systems that can learn from experience which could perform much much better and be much more scalable. In which case it [LLMs] will be another instance of the bitter lesson that the things that used human knowledge were eventually superseded by things that just trained from experience and computation.” - Richard Sutton